- COVID-19 Presented Data Challenges and Opportunities

- Business Objectives Are Driving Data Transformation

- The Challenge of Digital Transformation with Legacy Systems

- Futureproofing with Trusted Data and Modern Applications

- Measuring the Business Value of Data Quality Initiatives

- How Data Management Can Manage Risk / Wrap-up

If you were unable to join us for this year’s CDAO Insurance Live, you missed a host of presentations from thought leaders across the insurance industry, including Aflac, Nationwide, Pacific Life and more.

I had the privilege of moderating a live, interactive panel discussion with executives from Northwestern Mutual, Farmers Insurance and Hartford Steam Boiler on understanding the “why” behind their organizations’ data management and analytics strategies.

Our panelists used their decades of experience as chief data officers and data management executives in the insurance industry to discuss:

- How business drivers determine data analytics strategy

- Building the foundations of a robust data governance strategy across the business

- Identifying how to extract value in the near term and get business buy-in

- How cloud adoption fits into a data management roadmap

- Modernizing practices and technology so they can be sustained into the future

If you missed the live event (or want to watch again), I have highlighted a few of the key takeaways below — and be sure to watch a recording of the event to get the full experience and hear all the insights from our expert panel.

COVID-19 Presented Data Challenges and Opportunities

The COVID-19 pandemic has imposed untold challenges and constraints on businesses and, in many cases, required them to completely rethink how their employees collaborate remotely, how they develop new customer touchpoints, and how they modernize service delivery in an online-first world.

“Here at Northwestern Mutual, we certainly saw it as an accelerant for our customer experience-led strategy,” said panelist Don Vu, Chief Data Officer at Northwestern Mutual. “We were already invested in creating a robust digital experience that enhanced and deepened the relationships between our clients and our advisers.”

So while the pandemic did not completely upend Northwestern Mutual’s digital underwriting and other programs, it served as a catalyst to accelerate their shift to serve more of their clients and advisers with machine learning and digital products.

Another panelist — Yorck Einhaus, SVP and Chief Data Officer at Farmers Insurance — was quick to mention, “We have to make sure that we can enable a digital world and that the customer gets what they need whenever and wherever they need it. And so it’s also been an accelerator to focus on our digital journey.”

Business Objectives Are Driving Data Transformation

We know that it’s crucial to develop a robust business case before embarking on a data management, digital transformation or master data management project. But what are some of the business objectives driving digital projects at some of the world’s most successful insurance companies?

“Where we’ve started with just about each one of our data efforts has been around a business use case,” said panelist Louis DiModugno, SVP and Chief Data Officer at HSB – a MunichRe Company. “It’s very hard to start from the other end of creating that data lake and then finding someone who wants to use it. So, again, we’re very focused on use cases and really focused on what those monitored values are going to be associated with delivering.”

“The focus areas for us are around promoting growth and improving the agent and the customer experience,” Yorck said. “And from a technical perspective, [we’re] trying to simplify and future-proof our landscape, getting rid of legacy [systems], dealing with sins of the past. And due to the constantly changing regulations, we have to make sure that we have a solid and firm data governance model in place that protects the data that we have because data is not only our biggest asset, it is also one of our biggest liabilities.”

Don shared a similar sentiment: “Oftentimes, data governance is almost characterized as a defensive move. In many ways, we view it as a paved road where we want to work and collaborate with our Chief Privacy Officer and our friends in compliance and risk to put the appropriate controls and data management in place such that when we want to activate that data, it’s done in a way that complies with all laws and privacy obligations. We’re able to again facilitate and be an accelerant on the paved road for the business because we have these controls in place. In many ways our business strategy and data strategies are one and the same.”

The Challenge of Digital Transformation with Legacy Systems

Louis talked about the importance of cloud interoperability and how to get different systems and platforms to work together. Don was quick to point out that, unlike start-ups, insurance companies often work with both legacy and modern systems, platforms and applications as they undergo digital transformation.

“It really is a synergy in an ideal world if your roadmap combines the building of the new alongside the maintenance, enhancement and in some cases deprecation of the older ones — but the two of them have to go hand in hand,” Don said.

Louis mentioned that he and his team at HSB have looked at data virtualization to simultaneously optimize the new data systems while still connecting to the legacy environment when needed: “Our [virtualization] efforts have been around master data management, so we’re really about trying to find that one version of the truth or that one vision of a customer or their location or the objects that they’re insuring. We want to be able to see that across a number of different products and a number of dimensions — and so mastering is a big piece of it.”

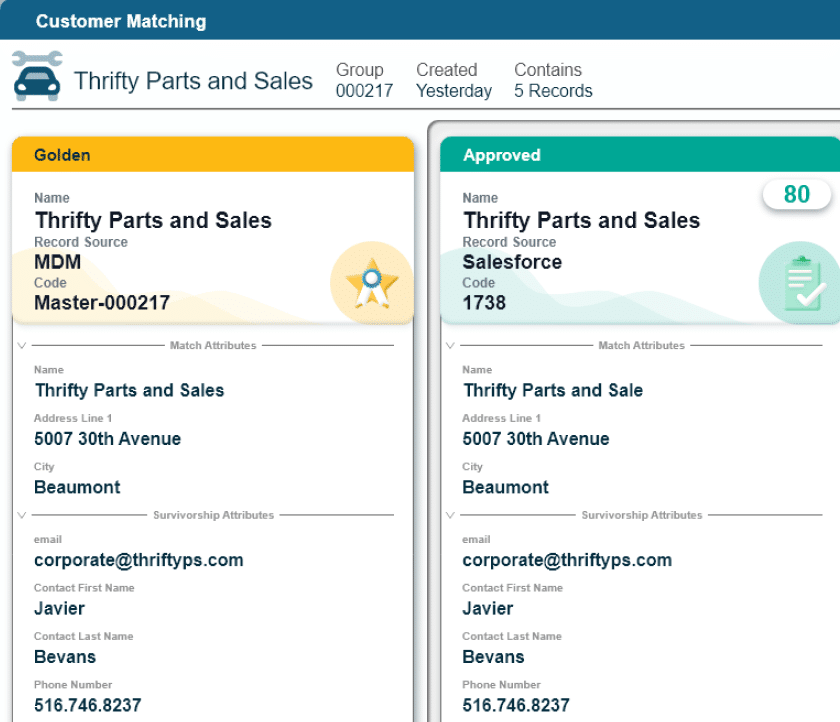

Realizing one version of the truth, wherever the data resides (legacy systems, a data lake, the cloud or other storage), is at the heart of master data management. Integrating and matching data under the relevant business rules and governance to create the Golden Record is what enables trusted, high-quality data, which is the foundation for one version of the truth for any data element.
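To make the Golden Record idea a little more concrete, here is a minimal Python sketch of match-and-merge. It is an illustration under simple assumptions, not how Profisee or any of the panelists’ organizations implement MDM: the source system names, the fields, the exact normalized-name match rule and the “most recent non-empty value wins” survivorship rule are all hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class SourceRecord:
    source: str             # hypothetical system name, e.g. "legacy_admin" or "crm_cloud"
    name: str
    email: Optional[str]
    phone: Optional[str]
    updated: date           # when this source last changed the record


def match_key(rec: SourceRecord) -> str:
    """Hypothetical match rule: records with the same normalized name are one entity."""
    return " ".join(rec.name.lower().split())


def golden_records(records: list) -> dict:
    """Group matching records, then keep the most recent non-empty value per field."""
    groups = defaultdict(list)
    for rec in records:
        groups[match_key(rec)].append(rec)

    result = {}
    for key, group in groups.items():
        # Newest records first, so the first non-empty value found is the most recent one.
        group.sort(key=lambda r: r.updated, reverse=True)
        golden = {"name": group[0].name, "sources": sorted({r.source for r in group})}
        for field in ("email", "phone"):
            golden[field] = next(
                (getattr(r, field) for r in group if getattr(r, field)), None
            )
        result[key] = golden
    return result


if __name__ == "__main__":
    records = [
        SourceRecord("legacy_admin", "Jane  Doe", "jane@old.example", None, date(2015, 3, 1)),
        SourceRecord("crm_cloud", "jane doe", None, "555-0100", date(2021, 6, 1)),
    ]
    print(golden_records(records))
```

In this toy run, the two “Jane Doe” records from different systems collapse into one entity whose email survives from the legacy system and whose phone survives from the newer cloud record. Production MDM matching usually needs fuzzier rules and data steward review rather than an exact normalized-name key; the deterministic rule here only keeps the sketch short.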

Futureproofing with Trusted Data and Modern Applications

Yorck noted that while organizations often look to cloud storage and applications when modernizing their technical infrastructure and data management, it’s important not to be simply “cloud-first” but also “cloud-smart,” using the project to generate quantifiable business value.

Don also mentioned that any digital transformation or data management project requires trusted, high-quality data to be successful: “Garbage in, garbage out. If you don’t trust the data then it’s not going to resonate and it’s not really going to have any impact. [Data quality] is foundational and a necessity.”

Yorck emphasized that the veracity and trustworthiness of the data are key to anyone using it, because if people do not trust the data, its value is immediately lost. Farmers Insurance is focused on explaining to business users that any data problems must be investigated “upstream,” where the data is entered. Working with business users to start with reporting, outcomes and business challenges in mind can help solve many data quality issues later.

Louis spoke about data governance in the context of resource constraints: an organization has only a finite number of data engineers and data stewards to manage its data.

“Are you going to use them to create a new project for a business sponsor to deliver value back to the organization or are they going to be focused around continual data quality associated with a data governance project?” Louis said.

Chief Data Officers need to convey the benefits of both types of projects to ensure continual support and buy-in from business sponsors.

Measuring the Business Value of Data Quality Initiatives

This CDAO panel was aptly titled “Maximizing Business Impact by Understanding the ‘Why’ When Building Your Data and Analytics Strategy.” The panelists and I touched on this theme throughout the session and shared why it is so important for CDAOs and insurance executives to accurately demonstrate the value of their initiatives in terms that resonate with the business.

“If you want to show value, you need to know where you start and you need to have some sort of measurement criteria,” Yorck said. “Even if you don’t have a target, you at least need to have a baseline and need to be able to measure the trend or the progress you’ve made. In some areas, it’s very easy, you can translate it into dollars, i.e., are we [our initiatives] adding to growth, how many more policies are we selling, how many more customers are we helping [the organization] gain.”

And Louis reiterated that the key to demonstrating the CDAO’s contributions to the business is working with trusted, high-quality data: “Your cleansing steps [make] a huge difference. You see through your modeling capabilities, the difference of not doing anything vs. adding those additional data elements and those cleansed data elements. So, the lift associated with having the additional data elements vs. not, I think that’s a big area to register the value increase.”

The other area of value that Louis highlighted was “time to data”. “When I first got to this organization, it could potentially take upwards of over a year to put together a good dataset for the modeling organization to be able to view their efforts around pricing and risk aggregation,” Louis said. “But what we’ve been able to see now is that essentially we built a factory [data factory] where data is being refreshed on a monthly or daily basis — so now access to good data immediately versus that year that it used to take, there’s definitely value associated with that. That time to market definitely has benefits.”

How Data Management Can Manage Risk / Wrap-up

Finally, the panel discussed risk management in the context of a Customer 360 model, where data and analytics can be used to stop fraud and mitigate risk. Louis gave an example of a large claimant who had been dropped and then tried to obtain insurance again through a treaty with a different carrier. His team’s data management and identity matching around the Customer 360 allowed them to identify the claimant, deny the new policy, and thus eliminate the unnecessary risk.

I appreciate CDAO Live giving me the opportunity to discuss something I am very passionate about with this panel of experts. And I encourage you to watch a recording of the event to get the full experience and hear all the insights Don, Louis and Yorck had to offer.

Harbert Bernard

Global Value Consultant Lead, Profisee

Harbert Bernard leads Profisee’s Value Management Practice, which works to develop Business Impact Roadmaps (BIRs) for a range of enterprises at no cost to the organization. He is an experienced management consultant with deep domain expertise in developing business cases for large technology investments. He understands the transformative power of MDM and is passionate about helping customers succeed. He believes the BIR approach is the way to start the journey.
