Good morning. Good afternoon. Good evening. Good. Whatever time it is. Wherever you are in this amazing planet of ours, I’m Malcolm Hawker, the host of the CDO Matters podcast.

We’re joined by our returning champion, Mr. Samir Sharma.

Yeah. Exactly. His second time on the podcast, maybe his third. No. I think it’s only your second.

Is it my second? I cannot remember.

I think it was... I can’t remember either.

Yeah. And I keep having Samir on. Second, third, fourth. Who knows?

Because we’re both old fogeys now, and our memories are fading.

Right?

Every time I can have Samir on the podcast, I’m happy to do it. He and I obviously get along very well. We’ve got great chemistry. We love talking about data and all sorts of things. We’re gonna talk about the year that was twenty twenty five. Although, most certainly, you’re gonna be watching this in early twenty twenty six. We haven’t quite figured out our schedule yet in terms of publishing episodes.

We’re gonna talk about the state of the union, the state of AI.

We’re gonna talk about other fun things that you as a data leader or a data practitioner will find useful, I hope. And hey, Samir, congrats on publishing your book.

Thank you very much, Malcolm.

Tell me all about your book, and then tell me why you finally did it.

Well, look, you know, I’ve just had a whole bunch of books go out, actually. Yours is on its way because you were kind enough to review it, help me with it, and give me a nice endorsement.

So this is still my old not-for-resale copy from... Like a proof copy, yeah.

A proof copy. Because every time one of the books, then I have to ship it out. So, you know, I’ve still got this. But, you know. Yeah. So here we go.

Why did I write it? That’s the question you asked me. Right? Yeah. Yeah. So I’ll tell you what.

It yeah. Go.

No. No. No. I mean, why did you write it? Yeah. What drove you?

What was, you know, leading to this great... I think over the... look.

And by the way, thank you for having me on today, again, for the second or nth time, however many it is.

So it’s always great to chat with you, Malcolm, and it’s always, I think, wonderful to speak about our industry and so on and what’s happening in it. Now, over the last twenty five years, over two decades of working in the industry, both on the client side and on the consulting side... Way back in about twenty fourteen, and I talk about the story in the book, I walked into a meeting. It was one of the first clients that I had when I created datazuum, launched datazuum back in twenty thirteen. In twenty fourteen, it’s like, whoa, you know, I’ve got my first client. Wait.

It takes a bloody long time.

And I walked into this meeting and basically what I saw was just mind boggling, right? I walked in and on one side there was a bunch of data architects, enterprise architects, IT people. And on the other side, there was the operational and commercial executives.

And in front of me was a big projection of a physical data model.

Now, you can imagine the minutiae on this physical data model being shown to these very people who are sitting there quizzically looking at it, squinting through their glasses or whatever, trying to work out what this person is telling them about relationships and entities and blah blah blah. And you’ve got all these very, you know, happy and ecstatic and excited tech guys and data guys on one side. And you’ve got these really confused business people on the other. And in that moment, I heard one of the execs basically sort of shout out, in a way that was, I’ve had enough of this meeting. Just give us all the data and we’ll do what we want with it. You and I know when you hear that, that’s a big problem, right? Yep.

I basically stopped the meeting. It was second or third day I’d been in that client. And literally I walked in and they thought, who is this guy?

And I said, let’s stop, let’s postpone this meeting for another day, probably for tomorrow if we can all get back tomorrow. I will find a better way to do this.

And I thought to myself, what am I doing? Why am I saying that? Am I insane?

And I walked out of there and I immediately booked a room which had floor to ceiling whiteboards and whatever and started sketching my ideas because I had an inkling that in there somewhere there was an answer.

And I thought to myself, if I can get something down today in a graphic image that I can then present tomorrow in that meeting as a better approach, maybe we might be able to get somewhere. So back then I was doing a data and BI strategy, yeah, for a large logistics company.

And what started forming in my mind is the obvious thing which you and I have both been working through for many years is actually what is the problem statement? What are we trying to identify here that we then need to work out and provide a solution? But not only with that, what I wanted to really understand is if I am identifying this problem and I can provide you with a solution, what I want to understand is what are the business questions that you’re answering and what are the decisions that you’re going to make off the back of those? And so if I could map that all through from problem statement, from strategic objective to problem statement through to decision, through to business question, through to the person that’s to be affected, who’s going to be using this stuff, etcetera, and then the data, I could probably work out a really you know, targeted way, laser focused way of actually going and getting the data I need and answering those questions that I need to to make sure that we can drive those decisions, capabilities, etcetera, and to deliver on that strategic objective.

Well, I thought, okay, by the end of the day, I’d had this thing shaped out and it was a canvas of sorts. And it was the first time I’d ever drawn anything like that. I think I had it in my head.

So I went into the room the next day. I said, look, we all were talking at odds yesterday. We were looking at a physical data model, which didn’t make sense to me. No one should be, you know, exposed to anything like that. It’s a horror.

You know?

It’s like Harry times ten. You shouldn’t be exposed to anything like that if you’re a business person. And no doubt that’s why they got frustrated. So I beamed up the canvas.

And it was just the first blows of this thing that was forming in my head. And I said, look, this is how we’re going to do it. We’re going to define what the actual problem is. We’re going to work out what decisions you need to make off the back of it, what business questions you’re answering, who’s this for, what’s the kind of focus in terms of strategic objective it’s linked to, is it a revenue thing, is it a cost efficiency, is it a risk, is it regulation, etcetera, compliance stuff.

And so we actually mapped this thing out just with one particular thing that the business and commercial teams were trying to focus on. And basically in that moment, every single person sitting on one side of the room and on the other side actually came together. They were talking about a business objective that they were understanding clearly that had a common language. It was scoped around value in terms of what are we trying to achieve and linked to that strategic objective so we could quantify it in some way.

And therefore, I think there was no talk of this whole technology first thing. There was no talk of conceptual physical data models or you know, anything like that. There was no talk of let’s just stand up a data warehouse or, you know, we’ve got this huge big data lake that we want to chuck stuff in.

That’s where the canvas was born.

And that’s when I realized, oh, actually there’s a decent way of doing this and maybe this is one of the ways that we should be trying to do this stuff. So as I started to build it out, it started to get momentum and people started to say, this really makes sense.

And it’s actually given us a way that we can speak to the technical people. It’s given the technical people a way to speak to the business people. It’s taken the edge off that whole hype cycle of, you know, we just want to go out and buy the latest shiny tool. And it’s got a value focused conversation around it which actually then helps everybody understand the purpose of why they’re doing it and gives them that north star of where they’re trying to get to.

So I’ll stop there because I think I’ve, you know...

So the data strategy canvas is the canvas you’re talking about.

That’s the focus of your book. And I love the origin story. I love it. Yeah.

It’s funny. You know, I’ve had many of those galvanizing moments as well. I shared a few of those in my book, but it’s interesting that twenty years later, and in my case, often even closer to thirty... Yes.

That I can still trace back to that one time in that one room.

And I’ve... I do this two or three times.

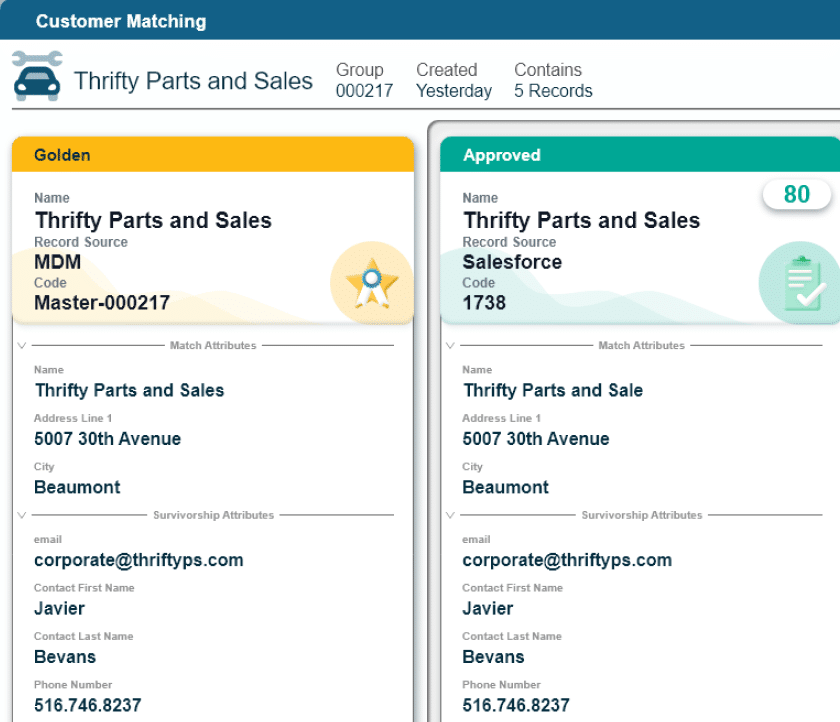

I know when I fell down the, in my case, MDM rabbit hole because I got asked a question by a CFO of how many customers do we have? And I laughed thinking it was the easiest question I would ever answer. Turned out it wasn’t.

But getting back to the canvas, one of the things that I really like is that it is very clearly outcome oriented. Right? Yes. In your case, it’s what are the questions we need to answer?

Right? What are the outcomes that we are trying to drive? Yeah. And work backwards from there.

Then if you put all those answers together, right, if you get all of those things defined, well, there’s your strategy.

But I do think that’s a little different, Samir, because I’ve worked with and paid a lot of money to the Deloittes, McKinseys, Accentures, EYs...

Right.

...of the world, where they don’t start from that. What they start from is the overall business strategy, where we sit down and figure out, okay, how are we best going to align to the business strategy? And they eventually get to those outcomes, but that’s not what they’re leading with. Do you understand what I’m saying? I’ve seen more of the kind of top down focus.

And I’m a little concerned about that because that’s exactly that top down focus: strategy first, outcome second. And of course, they should be married.

They should be. Yeah. They should be completely joined at the hip.

Yeah.

But what I’ve seen often is that strategies end up being this conceptual thing...

Right.

...that are conceptually interesting, strategically interesting, but don’t necessarily actually tie to the way that the business operates.

Yes. Yes. And I think that’s the, you know, let’s just go to the title of the book. The title is The Strategy Canvas. Yeah. A Field Guide for Data and AI. And actually, this is the key bit here, closing the strategy execution gap.

There you go.

Yeah. That’s the bit which I realized was just, yeah, a big massive gap. People would have this lovely slide where they would have beautiful pictures designed overnight. You know, you could see they’d send a rough deck over to their media team. And overnight, this media team would come out with this amazing slick presentation.

And that would, you know, it was slideware ready. It was board ready. It had all the lovely bells and whistles. But actually, the one thing you couldn’t do with it was actually execute it.

You know? And I mean, I’ve been on that side where I’ve worked in consultancy. I’ve worked in one of the big four, and I’ve seen that happen.

You know? And like you said, it’s very nice to have that stuff. Yes, it’s great. You know, it looks slick. But actually, if you can’t take that and create a pragmatic roadmap out of it and then be able to deliver that in a way that is methodical but yet practical and people understand why they’re doing it, then what’s the point of spending three hundred and fifty thousand pounds or three hundred and fifty thousand dollars on a slide deck of three fifty pages? I think you can get the answer in terms of the cost per sheet of that particular PowerPoint.

And that’s the reason, right? So I think that’s the reason I focused on that strategy execution gap. You and I have spoken before. The strategy execution gap comes from the underlying understanding of how you then take the strategy and pivot into an execution phase. But also it’s about how your operating model changes when you actually have to execute. I think that’s one of the things that many people really forget. So the thing about the canvas, and one thing, you know, going back to the origin story in twenty fourteen, of course the canvas has matured over, you know, that period of time from twenty fourteen, actually till last year. Because it was at about the end of last year when I looked at the canvas and I adapted it yet again for the umpteenth time. I think it was probably, you know, the twenty fourth or twenty fifth iteration. I should really, you know, be better at keeping track of the versions themselves.

But, you know, every time I make a change, it’s probably sometimes even on the fly in a client because they’ve just said something and I’ve realized it needs to have that addition. But, you know, when the thirtieth of November twenty twenty two came and we had the acceleration of AI purely based on the LLM, the large language model, and purely based on, you know, ChatGPT, I realized that we had to change the formation of the canvas. And so I looked at it and I said, I think we’ve got to adapt it. We’ve got to look at it because everybody’s going to be running off into the hills saying, oh my god, I’m using AI now. But actually we’ve been using AI for years. People have been using it forty, fifty years.

The Gen AI bit just exploded because it was easier for the layperson to actually just put something into the UI of OpenAI, of ChatGPT or Gemini or whoever.

You know, tell me about so and so.

Or, you know, find out about this particular subject. So it was really easy.

And so I thought to myself, I’ve got to change it. Because at the iteration it got to, it didn’t really... It had the advanced analytics component in it, but it didn’t morph into the whole risks and ethics part of it. And it was missing, I think, what has to be considered more of the sort of operating model and governance piece that it actually has to be stretched into. So then, you know, at the back end of last year it got to a point where I thought to myself, hey, do you know what? I think I’ve got something here. I think I’ve got something because I tried to write about it in twenty eighteen.

I tried to write about it again in twenty twenty two and it just wasn’t coming to me. It wasn’t right.

But off the back of last year and off the back of, you know, a little bit more of the maturity around AI and so on, I came to the realization that I actually finally had something. And it was a sounding board that I did on LinkedIn where I said, look, I’ve updated this. I’ve looked at this through the lens of not just advanced analytics but what AI might hold and all of the components that we might be thinking about. I’m not going anywhere near the shiny new toy syndrome or anything like that. I’m staying within the core ethics and the focus and the framework of this. And so I thought that the best way to look at it is through four lenses.

The strategic objectives, where you should start with the why, and that’s fine, right? Strategic framing, which looks at the key decisions and use cases, the AI and analytics opportunities, the value measures and ROI and cost benefit.

Strategic enablement, which covers data requirements and products, consumers and users, technology and systems. So you can see that we’re not starting with technology and systems first. That comes down the road because we’re actually starting with, as I mentioned earlier, what are those decisions? And then the final fourth lens is strategic readiness, which is portfolio management, operating model and governance, and risks and ethics. So I thought to myself, and then I called it the data and AI strategy canvas. Because before it was just a data strategy canvas, which was fine for what it was being used for.

But then I realized that actually now it’s morphed into something that makes total sense to anybody reading it. And actually anybody could just take it and run with it and work through it.

And luckily for them in the book, I’ve also given them a worked example. So it does take the sort of guessing out of the game, so to speak. But I think those four areas did it for me. That’s why I also wrote the book because I realized I’d come to a point where now I feel more comfortable getting it out there.

I had some stumbling blocks before and I just wasn’t happy with it. That refinement last year was probably what pushed me to do it.

Well, if you are a data leader and you are concerned about your tenure or you want to solidify your tenure, you want to do better at your job, you want to deliver amazing results, you should check out the data strategy canvas, or The Strategy Canvas, by Mr. Samir Sharma. I love the execution focus.

Thank you.

You could argue, I think, very compellingly that the number one challenge to CDOs today is the inability to execute. And that’s why CDOs are losing their turf to CIOs, losing their turf to CTOs when it comes to AI. They’re losing their turf to CFOs even, I’ve been hearing.

And the reason is because accountability will go to those who can deliver.

Right? Yeah. It just is. Nature abhors a vacuum, and if you need to deliver and you are not, then you’ll lose responsibility.

It’s just how it is. And sadly, I’m seeing many CDOs being relegated into purely governance centric roles. And I think we can do a lot more than that. I think we can do a lot better than that.

You know, you talked about maturity. You talked about execution. You talked about a lot of things. I know you touched on a lot of them in the book, but I can’t stress enough just focus on execution, focus on the delivery of value, focus on doing stuff.

Right? And make your scope as small as you need to. Right? Do whatever you need to do to deliver that value.

That is always going to serve you well.

Anyway...

Agree. Agree. Absolutely agree.

Huge, huge accomplishment.

Thank you. Thank you for helping me as well.

Well, of course.

You know, having just kind of come off writing my first book myself, I know that it is a big achievement. I know there’s a lot of sacrifices that need to be made. And I’m more than happy to support you and other smart people on LinkedIn because I think there’s a few of you out there. There’s a few other Samirs that have kind of figured things out and have good things to share.

And that’s why I’m happy to promote and have conversations about it.

Thank you. But anyway, you talked about AI, you talked about maturity, you talked about your own maturity, and kind of the evolution of your maturity.

I’m a little perplexed on that one though, Samir. I really, really, really want to say that the average data organization has gained and is now sufficiently mature, for lack of a better word, when it comes to AI, but I’m not sure I can say that with certainty. I think many of us continue to really struggle around what does it mean to operationalize AI at scale? And the numbers bear this out. Right? It’s the ninety five percent of POCs failing. It’s the fact that most Gen AI is still happening just at the desktop.

There’s very, very few of us that are actually doing any sort of customized implementation of Gen AI, like using complex RAG models or vector databases, knowledge graphs, the stuff that, you know, a lot of people are talking about online. We’re talking about it, but very few are actually doing it. What do you think is causing this gap between kind of the promise, the potential, right, and what is actually happening on the ground in a lot of data organizations? Because I think there’s certainly a gap there. Sure. What’s causing that? What are you thinking about?

Let me just go back to what you mentioned earlier, just about maturity. Now, when I talked about maturity, it didn’t necessarily mean that organizations have matured to the point where they can do AI.

What I meant is that my thinking had matured around the work that I was doing and then working with particular clients who had helped me to develop the... So when it comes to maturity, the maturity factor, you and I agree on that. There are very few people that we could probably pinpoint who have actually done those things right and are doing the right things, if you see what I mean. Yeah.

So I think the challenge for many is an old architecture problem, right?

Now if you think what’s happened over the last twenty five years, we went from, actually probably not even twenty five years, forty years, okay? We went from green and black, you know, black and green screens where we actually had really good, and I say really good because I used to do that programming. You know, I started off programming in the COBOL environment which many people don’t know. And I did it in the aerospace and defense industry.

And I was using the RPG400s and, you know, doing Unix as well on top of that. So I think we had fairly good structured data back then, to be fair, right? I could go into my second job in industry, which is actually where I fell into data after leaving programming, which I did for about three years. I thought, you know, I’ve had enough now. I need to get out from in front of a computer and actually work out what the hell is going on in the front lines.

And so I took that opportunity.

And as I started to realize the things that I... I was still in an environment where we had black and green screens. There was an IBM, you know, machine which still ran on COBOL. And I could get my team to extract data fairly quickly and then we could work on very rudimentary segmentation, but we could still do it, okay? We still had to use, you know, two thousand and two old Excel models and, you know, that’s how it was.

But we could do it. We had access to a few tools from IBM but very, very few.

Then what happened, I think, is we started to see the advent of things like CRM.

Okay?

And that then started to provide the context for everybody around the organisation to put data into systems, which was a really bad thing in my opinion.

Because what happened, there was very little understanding of what you should put in. And also back then, the first CRM tools, and I remember working on a whole heap of them, tools like Siebel, really didn’t have the right rigor on the front end validation, you know, the right dropdown lists and so on and so forth.

And I think then what happened is that spiraled and then we got into the whole ERP mess and then obviously the data warehouses. Data warehouses started coming alive in lots of organizations, not to say that they weren’t around, but they became more prevalent.

And so I think we then started to have this proliferation of data that we thought was coming from good sources and going into this data warehouse, but there were so many transformations that needed to happen, so much validation at the bottom end and downstream towards the warehouse layer. And we didn’t have anything upfront. So people were shoving in bad data and we were trying to make sense of it down at the downstream end when it got into the warehouse so that we could do how many customers do we have in the organization.

And then I think big data came along.

And that then threw everything out into, hey, we can just chuck everything into Hadoop, right, into a nice file system and there we’re going to chuck all of our data into it and then we’re just going to work out what we’re going to do. And I think then the whole architecture became a nightmare from there on in if it wasn’t before. And there wasn’t proper data modeling going on. There wasn’t real data architecture principles that should have been there.

And I think what happened then is we started buying more and more systems. Cloud came out. And we started to have all of these cloud, know, SaaS models, these products that were there, then all of that data needed to go somewhere. Suddenly, you’re saying, well, now we’re going from on prem into cloud or we’ve got a hybrid model and we’ve got data everywhere.

It’s not well architected. It’s not stitched together properly. We haven’t got the right modeling in place. But you know what?

We don’t need modeling because it’s old school. You know, we don’t need to do that stuff. And so we forget about it. And so we’ve got, you know, people trying to stitch this stuff together in SQL and it’s based on what?

Then we had the BI tools up front. No. Do the modeling in the BI tool because now we can do a DAX data model and, you know, Power BI can do blah blah blah. Or you can do it in Qlik or you can do it in Tableau so you can just go and inject data from Excel spreadsheets.

So, we’ll put it all into Excel spreadsheets. I think everything went. It’s sort of like a wild west.

And therefore, when you looked under the covers and you tried to work out what on earth is going on under here, by that time it was Spaghetti Junction. And I call it Spaghetti Junctionitis because I just think we’ve got ourselves into such a place that it’s become a huge mess. And we can’t get ourselves out of it because, again, we’ve just layered systems one on top of each other. Everybody’s got their own database. Everybody’s got their own data model. Everybody’s got their own way of doing something. But we’re still trying to conform everything into a data warehouse.

And therein is the... which I think has led to the point today where people are saying, hey, hang on, haven’t you, Mr. Head of Data or CDO or whoever, been working on data quality and governance for the last fifteen...

Well, yeah.

Haven’t you...

Yeah.

We’re still saying we’re not ready for AI.

Right. Right. So I think it’s fundamentally some of the way that things are stitched together. And we’ve had this collapse of purity in terms of how we actually do these things.

You know, if you look at the Bill Inmons and, you know, the Joe Reises and so on.

Highly tribal. Yeah. Yeah. Exactly. So, you know, I think we’ve got to come back to certain principles and say, what are we doing, guys?

And why are we doing it? And, you know, we can go out and buy AI tools again, but what are we going to do? We’re just going to lay that over this spaghetti junction that is not optimized and, you know, going to be structured right for how it’s going to be consumed. Of course, you can put AI on top of bad data.

Of course, you can and you can get some value from it, but you’re not going to get the extreme value. And I get that. I know that people say, Oh, yeah. We’ve just got to do it and chuck it on top of it and see what comes out.

Fine. But it might be twenty percent good. Maybe that’s still good enough, right? Right. You don’t have to have one hundred percent accuracy.

But I still think we need to get our data architecture sorted out, our enterprise architecture sorted out because it’s a complete mess. Everywhere you go, everybody’s got the same problem.

Well, not to mention that everything you just said is couched in what I would call the world of measurement, and AI exists, or at least Gen AI exists, in the world of meaning. Right? Yes.

And they’re two very, very different takes.

One is a story.

Right? Gen AI wants a story. Right? Any prompt you write is a little miniature story. Right? And the more context you can provide, and the more anecdotes you can provide, and the more depth of that story, the better the response is gonna be.

Everything you just described is all about the intersection of a row and a column. Right? Yeah. Yeah.

Correct. Yeah. Yeah. Versus the world of measurement. And we’re trying to cram these things together.

So not only do we have the architecture that you described, which is absolutely positively correct, by the way...

Yeah. Yeah.

Not only do we have that, we’re trying to take that square peg and cram it into the round hole that is Gen AI. Yeah. That, I think, answers my question in ways. Not every way. I think that there are behavioral challenges here. There are mindset related issues here.

There are There’s this whole heap of factors.

Overly conservative. Right? Which, I think, is amplified in Europe as compared to the United States. But that aside, there’s a whole bunch of things. But what you described is that we’ve got this old architecture that was maybe slightly optimized for the task at hand, which was building the dashboards. Right? But your point is well taken that even then, perhaps not, because of this broader ecosystem of these systems of record, like CRM systems and ERP systems, existing in their own silos and on and on and on.

We’re trying to take that, and we’re trying to make it do something that it was never designed to do.

But that gives job security. Right? Like, all these things I look at all the stuff related to all of these rags and dogs and dags, and I I see these as, like, hacks.

They they seem to be hacks.

Will be, though, won’t they?

Well, to me, and I’m not saying they’re not valuable. Right? I’m not saying that, like, feeding a knowledge graph into a prompt to improve the accuracy of a response from an LLM, I’m not saying that’s bad.

I’m not saying we shouldn’t do that. Of course, we should do all those things. But I kinda see that like back in the day when you had to put an IP address in the address bar of a browser. Right?

Before there was DNS. Right? Before there was, like, you know, resolving to vanity web names, you had to actually put in an IP address.

I do see a world where, I think, maybe, somehow, some way, our systems of record adapt so that instead of capturing transactions, they potentially start to capture stories.

Meaning... Like... Yeah. No. Actually, narrative. Like, literally a narrative. Right? Like... Yeah. Like a time series narrative where Samir logged in at ten fifty five PM.

Samir clicked on this. Samir moused over this. Samir bought this. Samir watched this. Like, where we’re actually... I see a world, maybe.

I don’t know. I could be crazy. But, yeah, the ongoing conversations around, like, architecture and OLAP, OLTP. Yeah. And all of that.

Look. I, you know, I I think I think we you’re right. We’ve got two worlds colliding. Yeah.

And the the challenge that we have in in our industry, in our community is that you have tribes. Yes. Right? Yeah.

And those tribes of people all posture. No. I’m right. No. You’re right. No. I’m right.

No, you’re never going to be right because I do it my way. And so you’re going to have your people who are into your ontologies or your semantic layers or your modeling or whatever it might be. And everybody is just gunning for their own portion of I think it’s time for us to say, Look, we’re going to do the same thing again now for this old gen AI and whatever is going to come next. So, what do we need to do as an industry to move beyond just saying my knowledge graph is better than yours and working out exactly what Yeah.

How we need to architect these things for what you just said, for the old world, which was that measurement, which, I guess, will still be... Of course it is.

Yeah. Of course.

And for this whole world of context and meaning and storytelling and understanding of the real world.

And I think those, you know, they’re obviously not gonna come together right now because they can’t.

And I think until we all decide upon, you know, and stop arguing and stop having these conversations on LinkedIn, which is, you know, the big echo chamber that it is, of my data warehouse is bigger than yours. Well, come on. You know, I think it’s time for us to look at actually what we’re doing to the businesses that we work in and also to go back and say to the vendors, stop the hype.

You know, just because AI came out, you know, in twenty twenty two, don’t slap AI on your product.

Don’t slap it on there when you know that underneath, it’s the same old product that we’ve been using. Next year, it’s going to be quantum. And someone’s going to say, now I’ve got a quantum semantic... I mean, Jesus Christ, you know. Are we going to do that? Of course. We are our own worst enemies in terms of the way that we talk about how we want to be so relevant in the business, but yet we do all these things to make the business think, are we really gonna depend on these guys?

Well, it’s interesting. When you were telling the kind of chronology, all of the disruptive events that seemed, at the time, to be fully justified and maybe even wise. But now in retrospect, when we look at them, were all highly disruptive and maybe not as wise as we may have thought.

And they’re all technology based. Everything that you... Yeah.

Everything is. Hey. Hey.

New CRM system. Siebel... okay. First of all, capturing stuff in COBOL and AS/400s and, okay. Yeah. Writing custom portrait. And then these, you know, user centric applications came along, and we allowed salespeople to start doing crazy things in Salesforce. And then it was the data warehouse, and they’re all technology driven.

Where you ended up was asking the question of, can we take more of a first principles approach? And if we were to redo this from scratch, which sounds like a very scary thing, and I don’t think anybody would necessarily do that. But as a thought exercise, I think it’s useful to say: would we do the same things the same way if we were to start from scratch? For example, here’s a thought exercise. I’m not saying don’t do this. But if I was starting a company from scratch on day one today, would I have a data warehouse?

And it’s rhetorical, but I’m not entirely sure I would do it.

It’s a good question. Now you can do that, right? I mean, okay, in hindsight and all that kind of stuff, and you can say, do I really need to architect it around something like that? Or do I need to have, as you said, the systems of record be much better in the way that they are established and probably speak to each other?

Maybe that’s a better way to do it. Or look at the processes and fundamentally architect everything around processes. Or look at, actually, do we want to make it a decision model and make everything around all the decisions we make and therefore underneath that are going to be processes? And therefore underneath that is going to be a layer of knowledge.

And underneath that is going to be information and data and blah blah blah. So we can do that now. Why didn’t we do that before when we had DIKW and all that kind of stuff for years upon end? Oh, my beloved DIKW.

I love the DIKW framework.

It’s been on almost every presentation I’ve given over the last six months. And if you don’t know what the data, information, knowledge, wisdom pyramid is, just search DIKW or come to one of my presentations in early twenty twenty six because there will be a slide.

But I think the challenge is that for, you know, a CDO who is under extreme pressure to deliver on AI, deliver something, under extreme pressure from... What does that mean, though?

What does that mean? Well, You see, this is the thing. Right?

Somebody has a checkbox, and we’re using it on the desktop.

But why isn’t there pushback? Why isn’t there somebody saying... You know, so I got a ping this morning from a group chat I’m in on WhatsApp. And the person said, my CEO just came back from a conference and now he wants to do AI, everything.

So suddenly, you know, that person is saying, what is the conversation I need to have with them? And I said, okay, you need to have this conversation. And at the end of it, I did say, you need to buy them this book because that will give them the actual context of why they’re gonna make the mistakes that they’re making. You know? And, you know, she knows I’ve written a book, and that’s fine. But I did say, probably one thing that you could do is just ask them why do they want to do it, for what benefit for the company, and what is it gonna give them?

Is it gonna give them some magical, you know... Yes.

A simple question to ask. Right? I think that’s a simple question to ask. And we can be the “yes, and” people instead of the “no” people. Right?

And I believe that that’s the right... Yeah. We should never say no. Absolutely. Right.

Yeah. But you’ve got to challenge it in some way because... Of course. Everybody is... and you’ve seen what the MIT, you know, came back with, ninety five percent. I don’t know if you actually saw this yesterday, but I’m gonna bring it up very quickly.

But there was an article yesterday, which actually I should have sent to you and I will forward it to you later. In it, it basically states, Microsoft scales back AI goals because almost nobody is using Copilot.

And I’m going to forward that to you.

Interesting. Interesting.

And it’s got Satya Nadella’s face on it and they’re looking at halving their sales on this kind of stuff. So, you know, market versus sales ambition is basically being cut in half. Now, you know, so we... and I think I did it in a post the other day. What are the three terms that you want to get rid of?

And the first one, the heaviest one and the most voted for, was “AI first organization,” because there aren’t going to be any.

That was my vote, by the way.

I know. I know it was. You know?

And so we come around to all of this stuff saying, how can we do it better? Yeah.

We’ll never be able to do it better if we don’t actually manage this... The trap here, right, is that ten years from now, if you and I are still doing this podcast ten years from now, and Samir’s next chronology, it will go from, you know, big data to Hadoop to misguided digital transformations... Yes.

And it will be AI first organization.

Yes.

And we’ll be talking in twenty thirty five about, you remember when everybody did AI first? And then I’ll repeat myself and say, well, look, we just fell into another technology trap, and that’s what held us back for the next fifteen years because we thought it would come up with AI. When lo and behold, it should have been about business process reengineering. Or it should have been about business outcomes. Or it should have been about execution, all the things you were talking about before. Anyway, just to get back to the kind of first principles thing. Yes.

Yeah.

As a CDO, I’m very intrigued by, and, again, maybe a little bit of a thought exercise, but I think you can start to put a little bit of meat on this bone. Within my organization, I’m still doing the dashboards. Right? I’m still doing governance and quality and all of those things.

But could I carve out a little chunk of my organization? And this is gonna be hard because we’re under increasing budget pressure. We’re losing ten percent of our budgets every year and blah blah blah, and I know this is gonna be hard. But can we take, like, the smallest, tiniest, micro little use case and start to apply some of those first principles?

Right? Start to apply some of the critical, like, reengineering and re-architecting with a really small little piece of the pie. Maybe it’s one small business unit or division. Maybe it’s just one use case.

Maybe it’s... who knows? Right? But can we start to look at things differently? Right? And at one use case.

And I’m not talking about, you know, deploying an agent in your CRM. Right? Right. Yeah.

I get it. Yeah. Yeah. I’m not talking about that. I’m talking about, like, rearchitecture, process reengineering, role reengineering.

Right? Like, looking at all of these things.

I think, look, I think we’ve got to, again, wind it back to why are we doing this stuff.

Of course. Yeah. Yeah.

Right? Because we could go in and do a big process reengineering piece of work. But if we don’t actually understand the direction that we’re heading Yeah. Then that business process reengineering will go to the wayside and it will be, again, another failed initiative.

I think you’re onto something when you say, let’s take a very small, narrow slice of a use case and dedicate a small team. Of course, you can do that. Why not? I do that all the time with clients.

Right? Yeah. The thing is you’ve got to be able to show the wider to the CEO, to the C suite, to the board. You’ve got to be able to show that value piece first, right?

Yeah. And I think value early, value fast, and break a few things but apply that sort of sentiment and get things going. But again, be focused on a very narrow use case and try and get that iterative view of that mindset and change, right?

If you can provide, and I’ve seen some of my colleagues do this. I’ve seen people I now work with in terms of datazuum where they used to be in very large organizations and they would be saying, right, mister CDO, you have no money this year.

What are you going to do? Yeah. Right. I’m going to prove out that what I’m going to build here is going to generate us five million dollars, five million pounds. If I can do that and I can generate that, will you give me a percentage of that five million quid so that I can then reinvest in what I’m doing and I can start to create more value? And lo and behold, one of them actually, as he started to do that, in the end created something like one hundred million in terms of... So if you have that principle of thinking that way through a commercial lens. And I know people who are doing that now in CDO positions who are doing it really well.

They’re not being, you know, handcuffed. They’re not in straitjackets because they’re thinking about governance all the time. They have broken out of that, and they’ve said, I need to focus on what the CEO wants to do. And if I can talk that commercial language, if I can show them that we’re going in the right direction, I don’t need to show them that I’ve built an AI model or a segmentation model or, you know, a recommendation engine or whatever it might be. They just need to see the outcome.

And if I can show them the outcome has got dollar signs or pound signs or euro signs or yen signs, well, great.

But if I show them that the outcome has got a new bit of data in the data warehouse... Nobody cares. Or I’ve just bought Microsoft Azure or I’ve just bought Fabric or I’ve just bought Databricks or whatever it is and, you know, I’ve configured everything and it looks lovely in libraries and so on. Well, they couldn’t care less about... Nobody cares.

Nope. Nobody cares. That is the ERD on the presentation in that boardroom in twenty fourteen.

The origin story of the data strategy canvas. Yes. By Samir Sharma. With that, my friend, we are at the top of the hour. We need to roll.

Yep. Yep. Go to Amazon. Check out Samir’s book. I was gonna say it’s a great stocking stuffer. We’re before Christmas here. By the time this airs...

Someone sent me a picture with it in their stocking.

Oh, brilliant. Absolutely. Absolutely brilliant. But but, you know, stocking stuffer for next year. But get it now.

Absolutely. Get it now. They’re flying off the shelf. Yeah.

Totally, completely recommended, as I said in my recommendation. But thank you, Samir, for joining us today.

Thank you very much, Malcolm. It’s always a pleasure.

Alright. With that, if you are still here, hey. Take a moment to like. Take a moment to subscribe, give those social algorithms what they need.

We will produce this content continuously in twenty twenty six. Looking forward to having additional conversations around all things data, data strategy, you name it.

So tune in to another episode of the CDO Matters podcast sometime very soon. Thanks, my friend. Thanks, everybody. See you soon.

ABOUT THE SHOW