Key Takeaways

As companies work to adopt generative AI, quite a lot of hype has built up around context in data and analytics as data practitioners work to build a semantic layer to make data usable by AI.

Context in data is important, but truth in data is just as important; it takes both to create trusted data.

Master data management is the answer to how companies can create data that reflects multiple versions of the truth to build the knowledge graphs AI needs to produce trustworthy outputs.

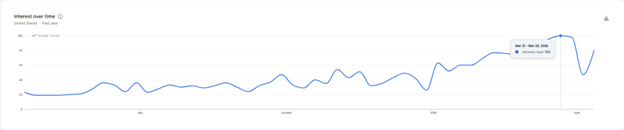

If you didn’t already know, 2026 is supposed to be the Year of Context in data and analytics. There’s plenty of evidence that those who predicted that ontologies, graphs, taxonomies and hierarchies would become a top priority for most CDOs were correct. Google Trends shows interest in the search phrase “semantic layer” peaking in March 2026 with nearly five times the interest it had in April 2025, and “context” was the one word heard most at the Gartner Data and Analytics Summit in Orlando. Given the hype around the issue of context, I see no reason this trend won’t continue through the year.

The reason for the hype is generative AI. Adding context to data transforms it from something gen AI struggles to interpret into information — something far more valuable and actionable. The addition of context enables the creation of a triple — a basic sentence describing one thing in relation to another. At scale, second and third degree relationships begin to reveal the full complexity of interactions between the things companies care most about: their customers, employees and products. Over time, this exposes a narrative of interactions that looks far more like a story than the intersection of a row and a column.

The most important characters in this story are generally referred to as master data, and understanding the relationships across them is critical to optimizing your business. Understanding your customers’ relationships with your products and content. Improving your employees’ relationships with your company. Knowing when a supplier is also a customer. And so on.
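To make the idea of a triple concrete, here is a minimal sketch in Python. It assumes each triple is a plain (subject, predicate, object) tuple. Every entity and predicate name below is hypothetical and invented for illustration, and a real knowledge graph would normally live in a graph database rather than in a list. The sketch walks outward from one employee to find first, second and third degree relationships, and it includes a company that is both a customer and a supplier.

```python
from collections import defaultdict

# Hypothetical triples: (subject, predicate, object). All names are illustrative.
TRIPLES = [
    ("Jane Doe", "manages_account_for", "Acme Corp"),
    ("Acme Corp", "purchased", "Widget Pro"),
    ("Acme Corp", "supplies", "Steel Brackets"),  # the customer is also a supplier
    ("Widget Pro", "is_documented_in", "Install Guide"),
]


def build_graph(triples):
    """Index triples by subject so relationships can be followed outward."""
    graph = defaultdict(list)
    for subject, predicate, obj in triples:
        graph[subject].append((predicate, obj))
    return graph


def within_degrees(graph, start, max_degree):
    """Return {entity: degree} for entities reachable from start in at most max_degree hops."""
    seen = {start: 0}
    frontier = [start]
    for degree in range(1, max_degree + 1):
        next_frontier = []
        for node in frontier:
            for _predicate, neighbor in graph.get(node, []):
                if neighbor not in seen:
                    seen[neighbor] = degree
                    next_frontier.append(neighbor)
        frontier = next_frontier
    del seen[start]  # the starting entity is not its own relationship
    return seen


graph = build_graph(TRIPLES)
reach = within_degrees(graph, "Jane Doe", 3)
```

In this toy data, `reach` places Acme Corp at the first degree, Widget Pro and Steel Brackets at the second, and Install Guide at the third. That is the kind of chain a single row-and-column lookup would not surface.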

These “graphs” of interactions provide a rich layer of context that gen AI can use to ground its behavior in ways that are more predictable, more accurate and less prone to hallucination. Put another way, adding context to each prompt (what many refer to as “semantics”) allows probabilistic systems to behave in more deterministic and precise ways. Rather than guess at context, the LLM knows exactly what it is.

This explains why vendors, consultants and analysts are all jumping on the context bandwagon. It also explains why a new generation of knowledge management “experts” has suddenly appeared on LinkedIn. Every other post in my feed is yet another expert talking about knowledge graphs, context graphs or some variation of a solution that relates one thing to another in a machine-readable format. I’m even hearing rumblings of companies seeking to hire so-called context engineers, which is a fancy way of describing library scientists who know some SQL.

But I digress.

Lost in the hype around AI’s insatiable demand for meaning, another equally impactful transformation is unfolding in the data management ecosystem. Alongside the focus on context, there’s a growing emphasis on trust in data. I see trust as a two-sided coin: truth on one side and meaning on the other. Trust requires both. Focusing on one without the other creates confusion. I touched on this in a recent Substack guest post I wrote for Bill Inmon, creator of the data warehouse.

In a nutshell, the concept of truth in data aligns with accuracy. Does the data reflect reality? Is your customer’s name Jon Smith or John Smith? If both appear across transactions, should they have separate customer IDs when they may be the same person? Did Jon buy 10 units or 100? Both machines and humans introduce inaccurate data all the time due to system errors, human mistakes or even ill intent.
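The Jon versus John question can be sketched as a simple fuzzy comparison. The example below is an illustration, not how any particular MDM product works. It uses `difflib.SequenceMatcher` from the Python standard library. The function names and the 0.85 threshold are assumptions chosen for the example. Matching in practice would typically weigh more attributes than a name alone, such as an email or address.

```python
from difflib import SequenceMatcher


def name_similarity(a, b):
    """Similarity in [0, 1] between two names, ignoring case and outer whitespace."""
    return SequenceMatcher(None, a.strip().lower(), b.strip().lower()).ratio()


def likely_same_person(a, b, threshold=0.85):
    """Flag a pair as a possible duplicate. The threshold is an arbitrary example value."""
    return name_similarity(a, b) >= threshold


# Two spellings seen across transactions, plus an unrelated name for contrast.
candidate = likely_same_person("Jon Smith", "John Smith")
unrelated = likely_same_person("Jon Smith", "Mary Jones")
```

Here `candidate` is flagged as a possible duplicate and `unrelated` is not. A flag like this only nominates records for review or merging. Deciding which spelling is true still takes a rule or a person.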

Regardless of the cause, inaccurate data is a massive problem, and the longer it persists, the more damage it does. My friend and data quality author Tom Redman coined the phrase “the rule of ten,” which asserts that it costs ten times as much to complete a simple operation when the data are flawed in any way as it does when the data are perfect. This helps explain why Gartner estimates that poor data quality costs organizations an average of $12.9 million per year.

This makes clear that data accuracy isn’t an academic exercise but has real financial impact. What’s more surprising is how many companies still haven’t prioritized it, but that’s a topic for another article. The important point is that truth is having a renaissance, one that’s long overdue.

This shift toward truth shows up in several ways, most notably in a growing number of mergers and acquisitions — particularly the acquisitions of Informatica by Salesforce and Reltio by SAP.

While the hype around context dominates LinkedIn feeds, billions of dollars are being spent by major enterprise software providers to enable truth through investments in master data management (MDM). These investments speak volumes and serve as a clear signal to data leaders prioritizing their efforts this year.

MDM exists to deliver truth in the data shared most widely across an organization, data that often persists across siloed systems and processes, including:

- Customer data

- Product data

- Employee data

- Supplier data

Master data is the glue connecting complex, cross-functional workflows like procure to pay and quote to cash, which only run efficiently when shared master data flows seamlessly across systems.

The problem is that each functional domain — marketing, finance and others — defines these entities differently. Each also relies on its own systems, like CRM for sales or ERP for finance. The result is that the most important data in the enterprise becomes both physically and semantically siloed — duplicated, inconsistent and poorly defined. Many organizations that haven’t embraced MDM cannot say with confidence how many customers they have because they lack a shared definition of what a customer is.

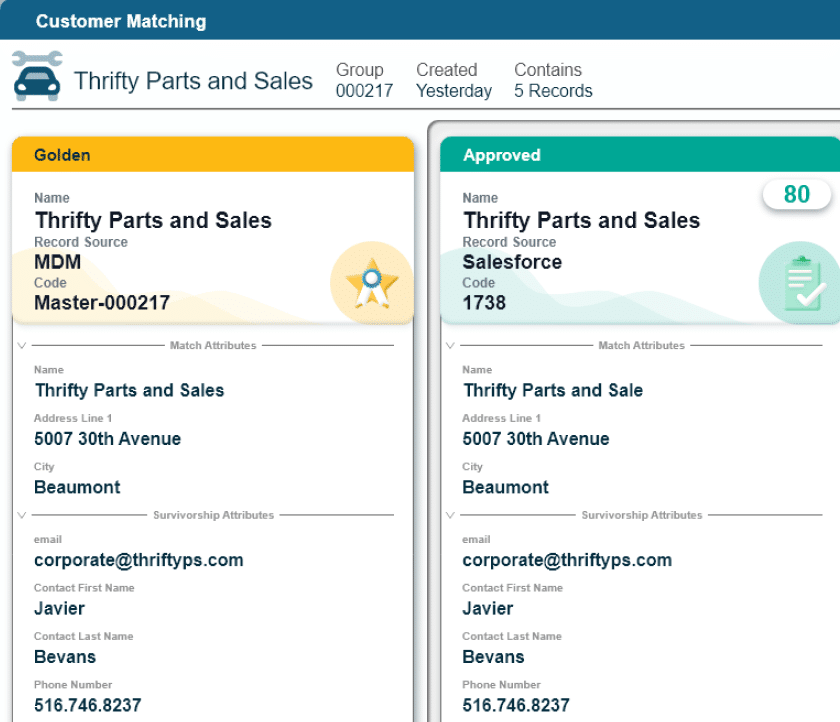

Enter MDM. MDM establishes a baseline of truth for the most important data in the enterprise. Early approaches focused on a single version of truth, but modern approaches recognize multiple versions of truth depending on how data is created and used. For example, if you run a service business, marketing may define a customer as any business entity that has ever purchased a product at any time, now or in the past. Finance may define a customer as a business entity that is currently paying for your services. Acknowledging that both “versions” of the master customer record are valid, depending on your use case, is a more adaptive and modern approach to MDM.
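One way to picture multiple versions of truth is as different filters over the same mastered records. The sketch below is a simplified illustration under assumed names: the `MasterCustomer` fields, the sample companies and both view functions are hypothetical, and a real MDM platform would model this with far richer rules.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MasterCustomer:
    """A hypothetical mastered record shared by every department."""
    customer_id: str
    name: str
    has_ever_purchased: bool
    is_currently_paying: bool


# Illustrative data only.
MASTER_RECORDS = [
    MasterCustomer("C1", "Acme Corp", True, True),
    MasterCustomer("C2", "Globex", True, False),
    MasterCustomer("C3", "Initech", False, False),
]


def marketing_customers(records):
    """Marketing's definition: any entity that has ever purchased."""
    return [r for r in records if r.has_ever_purchased]


def finance_customers(records):
    """Finance's definition: any entity that is paying right now."""
    return [r for r in records if r.is_currently_paying]


marketing_count = len(marketing_customers(MASTER_RECORDS))
finance_count = len(finance_customers(MASTER_RECORDS))
```

With this sample data, marketing counts two customers and finance counts one. Both answers come from the same underlying records, which is why neither count has to be "wrong."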

In a Yahoo! Finance interview, Marc Benioff, CEO of Salesforce, said that Salesforce acquired Informatica, in part, for its MDM capabilities. The press release issued on the day of the Informatica acquisition described it as bringing a “…single data pipeline with MDM on [Salesforce] Data Cloud.” The goal is to produce data that Salesforce’s Agentforce AI can trust.

Given that Reltio focuses exclusively on MDM, it’s self-evident that SAP acquired Reltio to strengthen its MDM capabilities. SAP has long offered its own lite MDM solution, Master Data Governance (MDG), but it has been tightly coupled to the SAP ecosystem and lacks the flexibility required for enterprise-wide data management. The ability to consume data from any system, in any format and transform it into trusted, usable data across downstream systems is what makes modern MDM so valuable.

These acquisitions undoubtedly highlight the importance of MDM, which has historically struggled to be seen as a business priority. For years, MDM has been overshadowed by more fashionable trends, context being the latest example.

But this year is different.

Some of the largest software companies in the world are voting with their dollars. And while attention and industry hype may remain fixed on context, these investments in MDM are reshaping the data management landscape in ways that are more meaningful and lasting than mere context without truth.

This is not to say that context isn’t important. It is. And companies are adding capabilities to support it through investments in data catalogs, semantic layers, graph databases and various other technologies. ServiceNow’s acquisition of data.world is another example. But the importance of this renewed focus on MDM and its role in enabling trust cannot be overstated, especially for organizations that have treated it as optional.

If you’re a data leader with AI ambitions, these moves should serve as a wake-up call. There is nothing more important than enabling trust in both your data and the AI that depends on it, and these acquisitions are proof that MDM can no longer be ignored.

Malcolm Hawker

Malcolm Hawker is a former Gartner analyst and the Chief Data Officer at Profisee. Follow him on LinkedIn.